The ‘Just One More’ Paradox (student stuff)

12 May, 2024 at 15:50 | Posted in Statistics & Econometrics | Leave a comment.

Here’s a somewhat simplified Python script of the case, and as you can see, the expected median wealth is quickly trending downwards:
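A minimal sketch of such a simulation, under stated assumptions: the payoffs (wealth multiplied by 1.8 on heads and by 0.5 on tails of a fair coin), the number of players, and the names `simulate_wealth` and `median_wealth_path` are illustrative choices, not taken from the post. With these numbers the expected multiplier per round is 1.15, while the typical (median) multiplier is about 0.95, which is why the average can grow while the median shrinks.

```python
import random
import statistics


def simulate_wealth(rounds, rng, up=1.8, down=0.5, start=1.0):
    """Wealth after staking everything on a fair coin flip, round after round."""
    wealth = start
    for _ in range(rounds):
        wealth *= up if rng.random() < 0.5 else down
    return wealth


def median_wealth_path(n_players=5000, checkpoints=(10, 50, 100), seed=0):
    """Median wealth across simulated players at each checkpoint (number of rounds)."""
    rng = random.Random(seed)
    result = {}
    for rounds in checkpoints:
        outcomes = [simulate_wealth(rounds, rng) for _ in range(n_players)]
        result[rounds] = statistics.median(outcomes)
    return result


medians = median_wealth_path()
for rounds, med in medians.items():
    print(f"rounds={rounds:>3}  median wealth={med:.6f}")
```

Each extra round looks attractive in expectation, yet the player in the middle of the distribution ends up with less and less.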

Monte Carlo simulation explained (student stuff)

4 May, 2024 at 19:46 | Posted in Statistics & Econometrics | Leave a comment.

The importance of ‘causal spread’

22 Apr, 2024 at 12:18 | Posted in Statistics & Econometrics | Leave a comment

No doubt exists that an entirely different subject has taken over control when it comes to education in scientific methodology in almost the entire field, namely statistics … The value of the statistical regulatory system should of course not be questioned, but it should not be forgotten that other forms of reflection are also cultivated in the land of science. No single subject can claim hegemony…

John Maynard Keynes … points to something that can be called ‘causal spread.’ To gain knowledge of humanity, the young person needs to encounter people of various kinds. Variation is equally important in all areas of knowledge formation — encountering diversity is enlightening.

This seems entirely obvious, but the viewpoint has hardly been admitted into a textbook on statistics. There, qualitative richness does not apply, only the quantitative. Keynes, on the other hand, unabashedly states that the number of cases examined is of little importance … Those assessing credibility may perhaps look at the probability figure as decisive. But sometimes the figure lacks a solid foundation, and then it is not worth much. When the assessor gets hold of additional material, conclusions may be turned upside down. The risk of this must also be taken into account.

When yours truly studied philosophy and mathematical logic in Lund in the 1980s, Sören Halldén was a great source of inspiration. He still is.

Applied econometrics — a messy business

16 Apr, 2024 at 15:34 | Posted in Statistics & Econometrics | Comments Off on Applied econometrics — a messy business

Do you believe that 10 to 20% of the decline in crime in the 1990s was caused by an increase in abortions in the 1970s? Or that the murder rate would have increased by 250% since 1974 if the United States had not built so many new prisons? Did you believe predictions that the welfare reform of the 1990s would force 1,100,000 children into poverty?

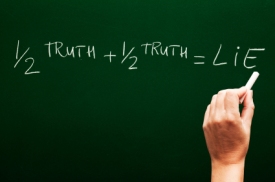

If you were misled by any of these studies, you may have fallen for a pernicious form of junk science: the use of mathematical modeling to evaluate the impact of social policies. These studies are superficially impressive. Produced by reputable social scientists from prestigious institutions, they are often published in peer reviewed scientific journals. They are filled with statistical calculations too complex for anyone but another specialist to untangle. They give precise numerical “facts” that are often quoted in policy debates. But these “facts” turn out to be will o’ the wisps …

These predictions are based on a statistical technique called multiple regression that uses correlational analysis to make causal arguments … The problem with this, as anyone who has studied statistics knows, is that correlation is not causation. A correlation between two variables may be “spurious” if it is caused by some third variable. Multiple regression researchers try to overcome the spuriousness problem by including all the variables in analysis. The data available for this purpose simply is not up to this task, however, and the studies have consistently failed.
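The spuriousness problem can be illustrated with a small simulation. This is only a sketch on made-up data: the variable `z` is a hypothetical common cause, and the helper names `slope` and `residuals` are introduced here for illustration. By construction `x` has no effect on `y`, yet a regression of `y` on `x` alone suggests one. Holding `z` fixed removes it, but only because in this toy case `z` happens to be observed.

```python
import random


def slope(x, y):
    """OLS slope of y on x (with intercept)."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx


def residuals(x, y):
    """What is left of y after regressing it on x (with intercept)."""
    b = slope(x, y)
    mx, my = sum(x) / len(x), sum(y) / len(y)
    return [yi - (my + b * (xi - mx)) for xi, yi in zip(x, y)]


rng = random.Random(1)
n = 5000
z = [rng.gauss(0, 1) for _ in range(n)]       # hidden common cause
x = [zi + rng.gauss(0, 1) for zi in z]        # x depends on z only
y = [2 * zi + rng.gauss(0, 1) for zi in z]    # y depends on z only, never on x

naive = slope(x, y)                                    # z left out
controlled = slope(residuals(z, x), residuals(z, y))   # z partialled out of both

print(f"slope of y on x, z omitted:    {naive:.2f}")
print(f"slope of y on x, z held fixed: {controlled:.2f}")
```

In real applications the list of possible third variables is open-ended and most of them are not in the data set, which is the point of the passage above.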

Mainstream economists often hold the view that if you are critical of econometrics it can only be because you are a sadly misinformed and misguided person who dislikes and does not understand much of it.

As Goertzel’s eminent article shows, this is, however, nothing but a gross misapprehension.

To apply statistical and mathematical methods to the real-world economy, the econometrician has to make some quite strong, limiting, and unreal assumptions (completeness, homogeneity, stability, measurability, independence, linearity, additivity, etc., etc.)

Building econometric models can’t be a goal in itself. Good econometric models are means that make it possible for us to infer things about the real-world systems they ‘represent.’ If we can’t show that the mechanisms or causes that we isolate and handle in our econometric models are ‘exportable’ to the real world, they are of limited value to our understanding, explanations or predictions of real-world economic systems.

Real-world social systems are usually not governed by stable causal mechanisms or capacities. The kinds of ‘laws’ and relations that econometrics has established are laws and relations about entities in models that presuppose causal mechanisms and variables — and the relationship between them — being linear, additive, homogeneous, stable, invariant and atomistic. But when causal mechanisms operate in the real world, they do so only in ever-changing and unstable combinations where the whole is more than a mechanical sum of parts. Since econometricians haven’t been able to convincingly warrant their assumptions of homogeneity, stability, invariance, independence, and additivity as being ontologically isomorphic to real-world economic systems, I remain doubtful of the scientific aspirations of econometrics.

There are fundamental logical, epistemological and ontological problems in applying statistical methods to a basically unpredictable, uncertain, complex, unstable, interdependent, and ever-changing social reality. Methods designed to analyse repeated sampling in controlled experiments under fixed conditions are not easily extended to an organic and non-atomistic world where time and history play decisive roles.

Econometric modelling should never be a substitute for thinking. From that perspective, it is really depressing to see how much of Keynes’ critique of the pioneering econometrics in the 1930s-1940s is still relevant today. And that is also a reason why social scientists like Goertzel and yours truly keep on criticizing it.

The general line you take is interesting and useful. It is, of course, not exactly comparable with mine. I was raising the logical difficulties. You say in effect that, if one was to take these seriously, one would give up the ghost in the first lap, but that the method, used judiciously as an aid to more theoretical enquiries and as a means of suggesting possibilities and probabilities rather than anything else, taken with enough grains of salt and applied with superlative common sense, won’t do much harm. I should quite agree with that. That is how the method ought to be used.

Keynes, letter to E.J. Broster, December 19, 1939

Feynman’s trick (student stuff)

9 Apr, 2024 at 11:43 | Posted in Statistics & Econometrics | Comments Off on Feynman’s trick (student stuff).

Difference in Differences (student stuff)

10 Mar, 2024 at 09:36 | Posted in Statistics & Econometrics | Comments Off on Difference in Differences (student stuff).

What EVERYONE should know about statistics

4 Mar, 2024 at 14:35 | Posted in Statistics & Econometrics | Comments Off on What EVERYONE should know about statistics

Even if you do not intend to produce statistics yourself, everyone engaged in academic studies of any kind should know enough to understand and evaluate statistics correctly. The pitfalls are many. With commendably pedagogical guidance, this book helps the reader avoid them.

Data analysis for social sciences (student stuff)

28 Feb, 2024 at 10:29 | Posted in Statistics & Econometrics | Comments Off on Data analysis for social sciences (student stuff).

When I teach statistics, I always try to recommend good supplementary material on YouTube to my students. This is a good example.

20 Best Econometrics Blogs and Websites in 2024

26 Feb, 2024 at 11:22 | Posted in Statistics & Econometrics | 1 Comment

Yours truly, of course, feels truly honoured to find himself on the list of the world’s 20 Best Econometrics Blogs and Websites.

3. Cambridge Econometrics Blog

9. How the (Econometric) Sausage is Made

14. Lars P Syll

Pålsson Syll received a PhD in economic history in 1991 and a PhD in economics in 1997, both at Lund University. He became an associate professor in economic history in 1995 and has since 2004 been a professor of social science at Malmö University. His primary research areas have been in the philosophy, history, and methodology of economics.

Econometrics — a second-best explanatory practice

26 Feb, 2024 at 09:11 | Posted in Statistics & Econometrics | 1 Comment

While appeal to R squared is a common rhetorical device, it is a very tenuous connection to any plausible explanatory virtues for many reasons. Either it is meant to be merely a measure of predictability in a given data set or it is a measure of causal influence. In either case it does not tell us much about explanatory power. Taken as a measure of predictive power, it is limited in that it predicts variances only. But what we mostly want to predict is levels, about which it is silent. In fact, two models can have exactly the same R squared and yet describe regression lines with very different slopes, the natural predictive measure of levels. Furthermore even in predicting variance, it is entirely dependent on the variance in the sample—if a covariate shows no variation, then it cannot predict anything. This leads to getting very different measures of explanatory power across samples for reasons not having any obvious connection to explanation.

Taken as a measure of causal explanatory power, R squared does not fare any better. The problem of explaining variances rather than levels shows up here as well—if it measures causal influence, it has to be influences on variances. But we often do not care about the causes of variance in economic variables but instead about the causes of levels of those variables about which it is silent. Similarly, because the size of R squared varies with variance in the sample, it can find a large effect in one sample and none in another for arbitrary, noncausal reasons. So while there may be some useful epistemic roles for R squared, measuring explanatory power is not one of them.
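The remark that two models can share exactly the same R squared while having very different slopes is easy to check numerically. The following is a sketch on simulated data (the data-generating numbers and the helper `fit` are assumptions made for illustration): the second outcome is simply the first one multiplied by five, which leaves R squared unchanged but makes the slope five times larger.

```python
import random


def fit(x, y):
    """Return (slope, r_squared) of an OLS fit of y on x with intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sxx, sxy ** 2 / (sxx * syy)


rng = random.Random(2)
x = [rng.gauss(0, 1) for _ in range(1000)]
noise = [rng.gauss(0, 1) for _ in range(1000)]

y_small = [xi + e for xi, e in zip(x, noise)]           # slope 1
y_large = [5 * xi + 5 * e for xi, e in zip(x, noise)]   # slope 5, noise scaled as well

slope_small, r2_small = fit(x, y_small)
slope_large, r2_large = fit(x, y_large)

print(f"slope={slope_small:.2f}  R^2={r2_small:.3f}")
print(f"slope={slope_large:.2f}  R^2={r2_large:.3f}")
```

What the slope says about levels is very different in the two cases, and R squared registers none of that difference.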

Although in a somewhat different context, Jon Elster makes basically the same observation as Kincaid:

Consider two elections, A and B. For each of them, identify the events that cause a given percentage of voters to turn out. Once we have thus explained the turnout in election A and the turnout in election B, the explanation of the difference (if any) follows automatically, as a by-product. As a bonus, we might be able to explain whether identical turnouts in A and B are accidental, that is, due to differences that exactly offset each other, or not. In practice, this procedure might be too demanding. The data or the available theories might not allow us to explain the phenomena “in and of themselves.” We should be aware, however, that if we do resort to explanation of variation, we are engaging in a second-best explanatory practice.

Modern econometrics is fundamentally based on assuming — usually without any explicit justification — that we can gain causal knowledge by considering independent variables that may have an impact on the variation of a dependent variable. As argued by both Kincaid and Elster, this is, however, far from self-evident. Often the fundamental causes are constant forces that are not amenable to the kind of analysis econometrics supplies us with. As Stanley Lieberson has it in Making It Count:

One can always say whether, in a given empirical context, a given variable or theory accounts for more variation than another. But it is almost certain that the variation observed is not universal over time and place. Hence the use of such a criterion first requires a conclusion about the variation over time and place in the dependent variable. If such an analysis is not forthcoming, the theoretical conclusion is undermined by the absence of information …

Moreover, it is questionable whether one can draw much of a conclusion about causal forces from simple analysis of the observed variation … To wit, it is vital that one have an understanding, or at least a working hypothesis, about what is causing the event per se; variation in the magnitude of the event will not provide the answer to that question.

Trygve Haavelmo made a somewhat similar point back in 1941 when criticizing the treatment of the interest variable in Tinbergen’s regression analyses. The regression coefficient of the interest rate variable being zero was according to Haavelmo not sufficient for inferring that “variations in the rate of interest play only a minor role, or no role at all, in the changes in investment activity.” Interest rates may very well play a decisive indirect role by influencing other causally effective variables. And:

the rate of interest may not have varied much during the statistical testing period, and for this reason the rate of interest would not “explain” very much of the variation in net profit (and thereby the variation in investment) which has actually taken place during this period. But one cannot conclude that the rate of interest would be inefficient as an autonomous regulator, which is, after all, the important point.

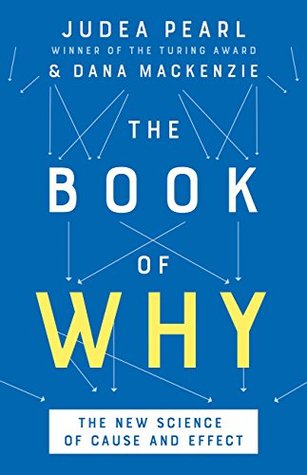

This problem of ‘nonexcitation’ — when there is too little variation in a variable to say anything about its potential importance, and we can’t identify the reason for the factual influence of the variable being ‘negligible’ — strongly confirms that causality in economics and other social sciences can never solely be a question of statistical inference. Causality entails more than predictability, and to really in-depth explain social phenomena requires theory.
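A small simulation may make the ‘nonexcitation’ point concrete. It is a sketch under assumed numbers, not a reconstruction of Haavelmo’s or Tinbergen’s data: the causal effect of `x` on `y` is fixed at 2 in both samples, and only the amount by which `x` happens to vary differs. The helper names `fit` and `sample` are hypothetical.

```python
import random


def fit(x, y):
    """Return (slope, r_squared) of an OLS fit of y on x with intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy / sxx, sxy ** 2 / (sxx * syy)


def sample(sd_x, n, rng, effect=2.0):
    """Draw data where y = effect * x + noise and x has standard deviation sd_x."""
    x = [rng.gauss(0, sd_x) for _ in range(n)]
    y = [effect * xi + rng.gauss(0, 1) for xi in x]
    return x, y


rng = random.Random(6)
_, r2_quiet = fit(*sample(0.05, 2000, rng))   # x barely moves in this period
_, r2_active = fit(*sample(2.0, 2000, rng))   # x moves a lot in this period

print(f"R^2 when x barely varies: {r2_quiet:.3f}")
print(f"R^2 when x varies a lot:  {r2_active:.3f}")
```

The variable ‘explains’ almost nothing in the first sample and almost everything in the second, although its causal effect is identical by construction.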

Analysis of variation — the foundation of all econometrics — can never in itself reveal how these variations are brought about. Only when we can tie actions, processes, or structures to the statistical relations detected, can we say that we are getting relevant explanations of causation. Too much in love with axiomatic-deductive modelling, mainstream economists especially tend to forget that accounting for causation — how causes bring about their effects — demands deep subject-matter knowledge and acquaintance with the intricate fabrics and contexts. As Keynes already argued in his A Treatise on Probability, statistics (and econometrics) should primarily be seen as means to describe patterns of associations and correlations, means that we may use as suggestions of possible causal relations. Forgetting that, economists will continue to be stuck with a second-best explanatory practice.

Potential outcomes and regression (student stuff)

19 Feb, 2024 at 15:04 | Posted in Statistics & Econometrics | Comments Off on Potential outcomes and regression (student stuff).

Econometric modeling and inference

27 Jan, 2024 at 11:40 | Posted in Statistics & Econometrics | Comments Off on Econometric modeling and inference

The impossibility of proper specification is true generally in regression analyses across the social sciences, whether we are looking at the factors affecting occupational status, voting behavior, etc. The problem is that as implied by the three conditions for regression analyses to yield accurate, unbiased estimates, you need to investigate a phenomenon that has underlying mathematical regularities – and, moreover, you need to know what they are. Neither seems true … Even if there was some constancy, the processes are so complex that we have no idea of what the function looks like.

Researchers recognize that they do not know the true function and seem to treat, usually implicitly, their results as a good-enough approximation. But there is no basis for the belief that the results of what is run in practice is anything close to the underlying phenomenon, even if there is an underlying phenomenon. This just seems to be wishful thinking. Most regression analysis research doesn’t even pay lip service to theoretical regularities. But you can’t just regress anything you want and expect the results to approximate reality. And even when researchers take somewhat seriously the need to have an underlying theoretical framework … they are so far from the conditions necessary for proper specification that one can have no confidence in the validity of the results.

Most work in econometrics and regression analysis is done on the assumption that the researcher has a theoretical model that is ‘true.’ Based on this belief of having a correct specification for an econometric model or running a regression, one proceeds as if the only problem remaining to solve has to do with measurement and observation.

The problem is that there is little to support the perfect specification assumption. Looking around in social science and economics we don’t find a single regression or econometric model that lives up to the standards set by the ‘true’ theoretical model — and there is nothing that gives us reason to believe things will be different in the future.

To think that we can construct a model where all relevant variables are included and correctly specify the functional relationships that exist between them is not only a belief with little support but a belief impossible to support.

The theories we work with when building our econometric regression models are insufficient. No matter what we study, there are always some variables missing, and we don’t know the correct way to functionally specify the relationships between the variables.

Every regression model constructed is misspecified. There is always an endless list of possible variables to include and endless possible ways to specify the relationships between them. So every applied econometrician comes up with his own specification and ‘parameter’ estimates. The econometric Holy Grail of consistent and stable parameter values is nothing but a dream. The theoretical conditions that have to be fulfilled for regression analysis and econometrics to really work are nowhere even closely met in reality. Making outlandish statistical assumptions does not provide a solid ground for doing relevant social science and economics. Although regression analysis and econometrics have become the most used quantitative methods in social sciences and economics today, it’s still a fact that the inferences made from them are of strongly questionable validity.

The econometric art as it is practiced at the computer … involves fitting many, perhaps thousands, of statistical models … There can be no doubt that such a specification search invalidates the traditional theories of inference … All the concepts of traditional theory utterly lose their meaning by the time an applied researcher pulls from the bramble of computer output the one thorn of a model he likes best, the one he chooses to portray as a rose.
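What a specification search does to conventional inference can be sketched with pure noise. In the toy example below (all numbers and the helper `t_stat` are assumptions for illustration), the outcome and all 200 candidate regressors are independent random draws, so no regressor has any effect. Picking the ‘best’ of the 200 fits nevertheless yields a t-value that would pass as significant if reported on its own.

```python
import math
import random


def t_stat(x, y):
    """t-statistic of the slope in an OLS fit of y on x with intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    b = sxy / sxx
    a0 = my - b * mx
    rss = sum((yi - a0 - b * xi) ** 2 for xi, yi in zip(x, y))
    se = math.sqrt(rss / (n - 2) / sxx)
    return b / se


rng = random.Random(3)
n_obs, n_candidates = 100, 200
y = [rng.gauss(0, 1) for _ in range(n_obs)]   # outcome: pure noise

t_values = []
for _ in range(n_candidates):
    x = [rng.gauss(0, 1) for _ in range(n_obs)]   # candidate regressor: pure noise
    t_values.append(t_stat(x, y))

best_t = max(t_values, key=abs)                         # the model the searcher would report
n_significant = sum(abs(t) > 1.96 for t in t_values)    # nominal 5% two-sided threshold

print(f"largest |t| found: {abs(best_t):.2f}")
print(f"'significant' regressors out of {n_candidates}: {n_significant}")
```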

Why quasi-experimental evaluations fail

19 Jan, 2024 at 11:18 | Posted in Statistics & Econometrics | Comments Off on Why quasi-experimental evaluations fail

Evaluation research tends to be method-driven. Everything needs to be apportioned as an ‘input’ or ‘output’, so that the programme itself becomes a ‘variable’, and the chief research interest in it is to inspect the dosage in order to see that a good proper spoonful has been applied …

The quasi-experimental conception is again deficient. Communities clearly differ. They also have attributes that are not reducible to those of the individual members … A particular programme will only ‘work’ if the contextual conditions into which it is inserted are conducive to its operation, as it is implemented. Quasi-experimentation’s method of random allocation, or efforts to mimic it as closely as possible, represent an endeavour to cancel out differences, to find out whether a programme will work without the added advantage of special conditions liable to enable it to do so. This is absurd. It is an effort to write out what is essential to a programme—social conditions favourable to its success. These are of critical importance to sensible evaluation, and the policy maker needs to know about them. Making no attempt to identify especially conducive conditions and in effect ensuring that the general, and therefore the unconducive, are fully written into the programme almost guarantees the mixed results we characteristically find.

Design-based vs model-based inferences

17 Jan, 2024 at 19:24 | Posted in Statistics & Econometrics | Comments Off on Design-based vs model-based inferences

Following the introduction of the model-based inferential framework by Fisher and the introduction of the design-based inferential framework by Neyman [and Pearson], survey sampling statisticians began to identify their respective weaknesses.

With regard to the model-based framework, sampling statisticians found that conditioning on all stratification and selection/recruitment variables, and allowing for their potential interactions with independent variables, complicated model specification (Pfeffermann, 1996). Such conditioning also complicated interpretation of substantively interesting model parameters and swallowed needed degrees of freedom (Pfeffermann, Krieger, & Rinott, 1998). Additionally, such conditioning was found to be error prone; particularly if little was known about the sample selection mechanism, relevant selection/recruitment variables could easily be unknowingly omitted …

With regard to the pure design-based framework, sampling statisticians felt limited by restrictions on the type of parameters that could be estimated (simple statistics such as means, totals, and ratios) and the type of inference that could be obtained (descriptive, finite population inference; Graubard & Korn, 2002; Smith, 1993). Additionally, statisticians increasingly realized that the design-based framework’s arguably greatest purported advantage (according to Neyman, 1923, 1934) is not entirely true: it does not provide inference free of all modeling assumptions. True, the design-based framework does not involve explicit attempts to write out a model for the substantive process that generated y values in an infinite population. However, the sampling weight itself entails an implicit (or hidden) model relating probabilities of selection and the outcome (Little, 2004, p. 550). Adjustments to the weight for nonsampling errors such as under-coverage and nonresponse require further implicit modeling assumptions …
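How a sampling weight works, and what it quietly assumes, can be sketched as follows. The population, the two strata, and the selection probabilities are invented for illustration. Each sampled unit is weighted by the inverse of its selection probability, which corrects the estimate of the population mean only because, within a stratum, selection is assumed to be unrelated to the outcome.

```python
import random

rng = random.Random(4)

# Hypothetical finite population: two strata with different outcome levels.
population = (
    [("A", rng.gauss(10, 1)) for _ in range(9000)]
    + [("B", rng.gauss(20, 1)) for _ in range(1000)]
)
true_mean = sum(y for _, y in population) / len(population)

# Unequal-probability design: stratum B is heavily oversampled.
p_select = {"A": 0.05, "B": 0.50}
sample = [(s, y) for s, y in population if rng.random() < p_select[s]]

# Ignoring the design: plain sample mean.
unweighted = sum(y for _, y in sample) / len(sample)

# Design-based estimate: weight each unit by 1 / (its selection probability).
weights = [1 / p_select[s] for s, _ in sample]
weighted = sum(w * y for w, (_, y) in zip(weights, sample)) / sum(weights)

print(f"population mean:        {true_mean:.2f}")
print(f"unweighted sample mean: {unweighted:.2f}")
print(f"weighted sample mean:   {weighted:.2f}")
```

If selection within a stratum depended on the outcome itself, for example through nonresponse, the same weights would no longer repair the estimate, and further assumptions would be needed.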

Are RCTs — really — the best way to establish causality?

15 Jan, 2024 at 10:26 | Posted in Statistics & Econometrics | 1 Comment

The best method is always the one that yields the most convincing and relevant answers in the context at hand. We all have our preferred methods that we think are underused. My own personal favorites are cross-tabulations and graphs that stay close to the data; the hard work lies in deciding what to put into them and how to process the data to learn something that we did not know before, or that changes minds. An appropriately constructed picture or cross-tabulation can undermine the credibility of a widely believed causal story, or enhance the credibility of a new one; such evidence is more informative about causes than a paper with the word “causal” in its title. The art is in knowing what to show. But I don’t insist that others should work this way too.

The imposition of a hierarchy of evidence is both dangerous and unscientific. Dangerous because it automatically discards evidence that may need to be considered, evidence that might be critical. Evidence from an RCT gets counted even if the population it covers is very different from the population where it is to be used, if it has only a handful of observations, if many subjects dropped out or refused to accept their assignments, or if there is no blinding and knowing you are in the experiment can be expected to change the outcome. Discounting trials for these flaws makes sense, but doesn’t help if it excludes more informative non-randomized evidence. By the hierarchy, evidence without randomization is no evidence at all, or at least is not “rigorous” evidence.

The almost religious belief with which the propagators of RCTs — including ‘Nobel prize’ winners like Duflo, Banerjee and Kremer — portray them cannot hide the fact that RCTs cannot be taken for granted to give generalizable results. That something works somewhere is no warranty for us to believe it works for us here or that it generally works. Whether an RCT is externally valid or not is never a question of study design. What ‘works’ is always a question of context.
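A toy simulation shows why a flawless randomization says little about other contexts. Everything here is assumed for illustration: the treatment has an effect of 2 only where local conditions are conducive and no effect elsewhere, and the two contexts differ only in how common conducive conditions are. The function name `run_rct` is hypothetical.

```python
import random


def run_rct(n, share_conducive, rng):
    """Difference in mean outcomes between randomly assigned treated and control units.

    Assumed mechanism: the treatment adds 2.0 where conditions are conducive, 0.0 otherwise.
    """
    treated, control = [], []
    for _ in range(n):
        conducive = rng.random() < share_conducive
        effect = 2.0 if conducive else 0.0
        baseline = rng.gauss(0, 1)
        if rng.random() < 0.5:        # fair-coin random assignment
            treated.append(baseline + effect)
        else:
            control.append(baseline)
    return sum(treated) / len(treated) - sum(control) / len(control)


rng = random.Random(5)
ate_there = run_rct(4000, share_conducive=0.9, rng=rng)   # where the trial was run
ate_here = run_rct(4000, share_conducive=0.1, rng=rng)    # where the policy is to be used

print(f"estimated effect in the trial context:  {ate_there:.2f}")
print(f"estimated effect in the target context: {ate_here:.2f}")
```

Both trials are internally valid, yet the first one is a poor guide to what the programme would do in the second setting.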

Leaning on an interventionist approach often means that instead of posing interesting questions on a social level, the focus is on individuals. Instead of asking about structural socio-economic factors behind, e.g., gender or racial discrimination, the focus is on the choices individuals make. Duflo et consortes want to give up on ‘big ideas’ like political economy and institutional reform and instead solve more manageable problems ‘the way plumbers do.’

Yours truly is far from sure that is the right way to move economics forward and make it a relevant and realist science. The ‘identification problem’ is certainly more manageable in plumbing, but we should never forget that clinical trials and medical studies have another dimensionality and heterogeneity than what we encounter in most social and economic contexts.

A plumber can fix minor leaks in your system, but if the whole system is rotten, something more than good old-fashioned plumbing is needed. The big social and economic problems we face today will not be solved by plumbers performing interventions or manipulations in the form of RCTs.

The present RCT idolatry is dangerous. Believing randomization is the only way to achieve scientific validity blinds people to searching for and using other methods that in many contexts are better. Insisting on using only one tool often means using the wrong tool.

Randomization is not a panacea. It is not the best method for all questions and circumstances. Proponents of randomization make claims about its ability to deliver causal knowledge that are simply wrong. There are good reasons to share Deaton’s scepticism on the now popular — and ill-informed — view that randomization is the only valid and the best method on the market. It is not.
